Sonar Experiment No. 3

This experiment is largely a repeat of Sonar Experiment No. 2.5 and was made possible by my previous upgrade (see Upgrade Complete!) and two modifications: (1) collecting video data for each run and (2) collecting ultrasonic range data from both front sensors. Having the video available for each run made it easier to analyze the numerical data, because I could follow along in the plots while watching a replay of Brake 'Bot in action. Doing this gave me a better understanding of how Brake 'Bot perceived its environment. It worked much better than having only the range and heading data, as in the previous experiments.

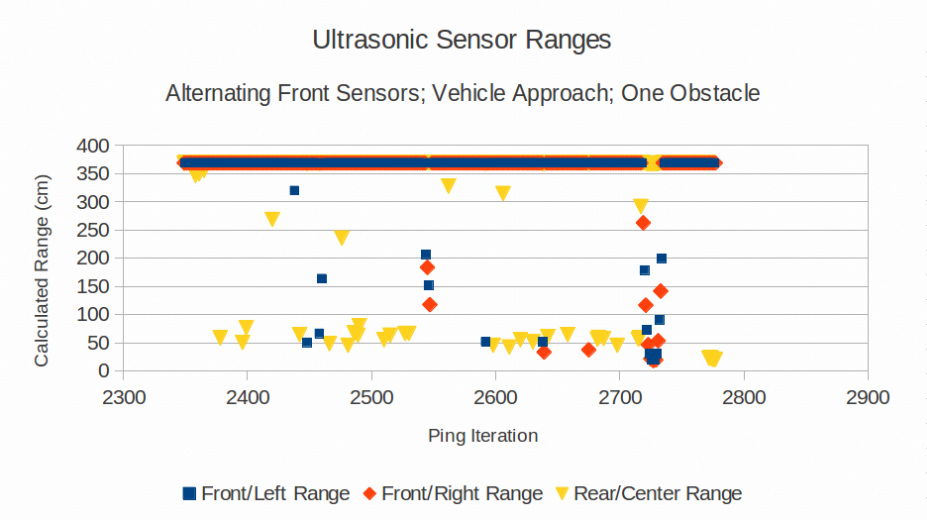

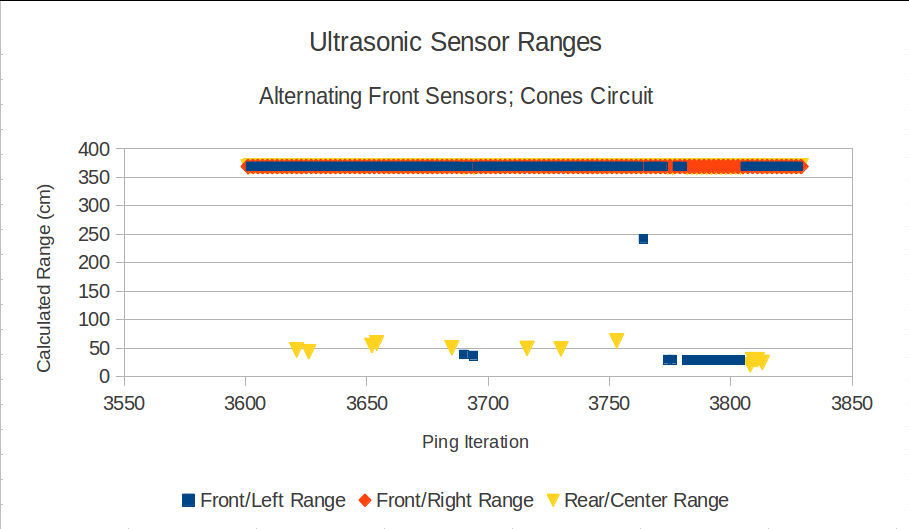

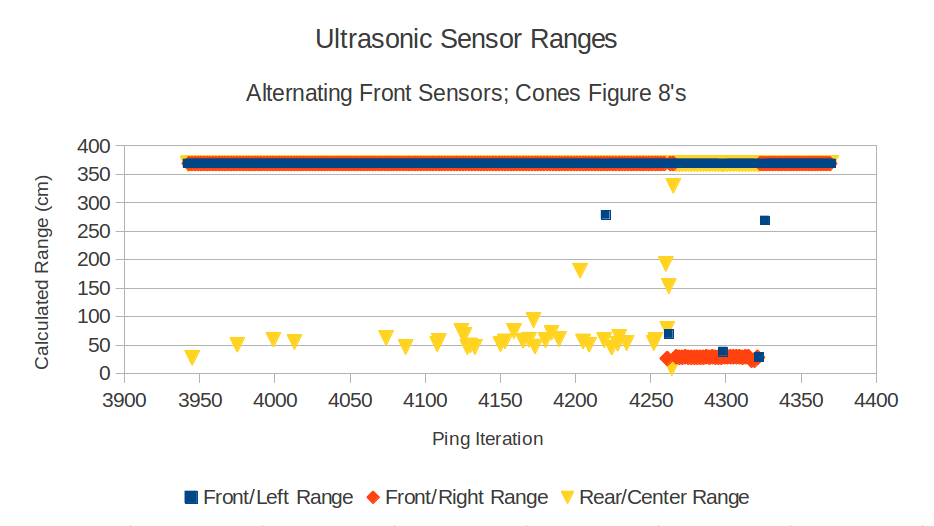

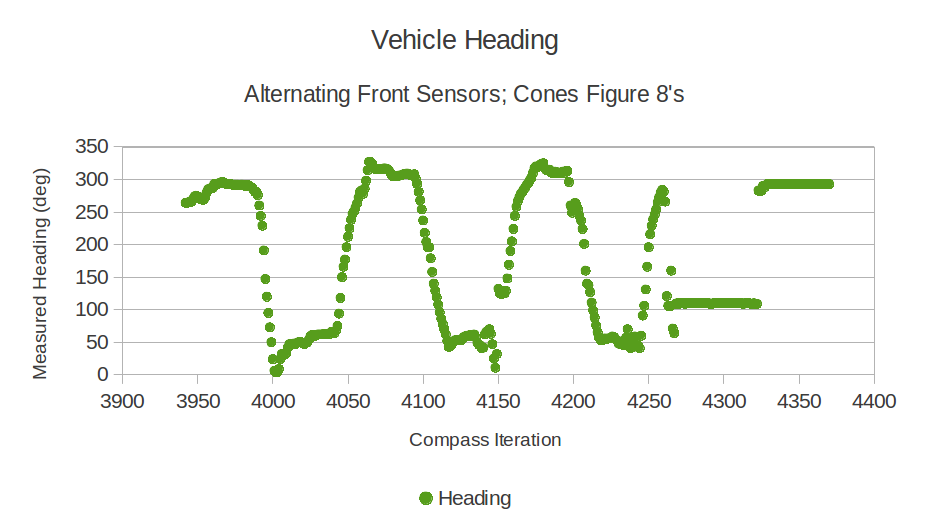

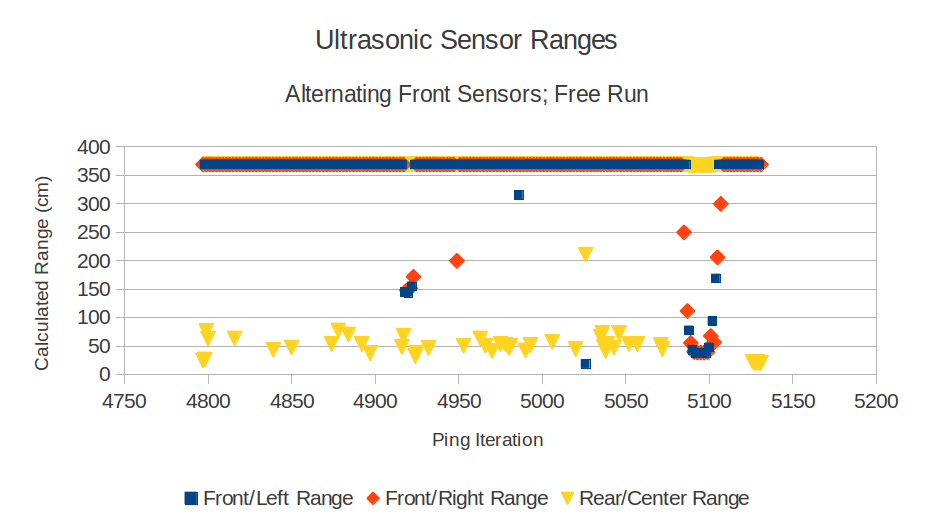

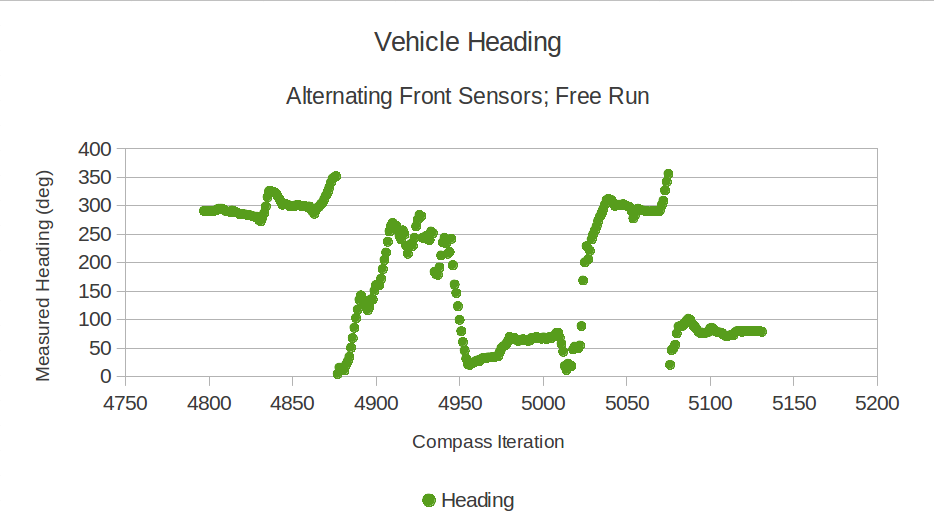

Out of curiosity, I enabled both front sensors to collect range data, alternating between them on each ping iteration. On the first iteration, Brake 'Bot took measurements from the Front/Left and Rear/Center sensors. On the next iteration, it took measurements from the Front/Right and Rear/Center sensors. On the following iteration it returned to the Front/Left and Rear/Center sensors, and it continued alternating this way for the entire experiment.
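The alternation described above can be sketched in a few lines of Python. This is only an illustration of the schedule, not the code from the Arduino sketch: the function names and the 0-based iteration counter are my own assumptions, and the second helper assumes the front readings arrive as one interleaved stream that starts with the Front/Left sensor.

```python
REAR = "Rear/Center"
FRONT_SENSORS = ("Front/Left", "Front/Right")


def sensors_for_iteration(i):
    """Return the (front, rear) sensor pair pinged on 0-based iteration i.

    Even iterations use Front/Left, odd iterations use Front/Right.
    The rear sensor is pinged on every iteration.
    """
    return FRONT_SENSORS[i % 2], REAR


def split_front_readings(front_ranges):
    """Split one interleaved stream of front readings into (left, right).

    Assumes the stream starts with a Front/Left reading (hypothetical layout).
    """
    left = front_ranges[0::2]   # readings from iterations 0, 2, 4, ...
    right = front_ranges[1::2]  # readings from iterations 1, 3, 5, ...
    return left, right


if __name__ == "__main__":
    for i in range(4):
        print(i, sensors_for_iteration(i))
```

One consequence of this scheme is that each front sensor is sampled at half the rate of the rear sensor, which is worth keeping in mind when comparing the front and rear plots.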

Materials

Here's what I used to conduct this experiment:

[ ] GoPro Hero 3+ Camera

[ ] Brake 'Bot - equipped with two front-facing ultrasonic sensors and one rear-facing ultrasonic sensor

[ ] small toolbox - used as my target reflector

[ ] parking lot - with a few objects

[ ] Arduino sketch to log ultrasonic and compass measurements to an SD card (pushAltPingCompassLogger.ino)

| pushAltPingCompassLogger.ino | |
| --- | --- |
| File Size: | 10 kb |
| File Type: | ino |

Procedure

There were six phases:

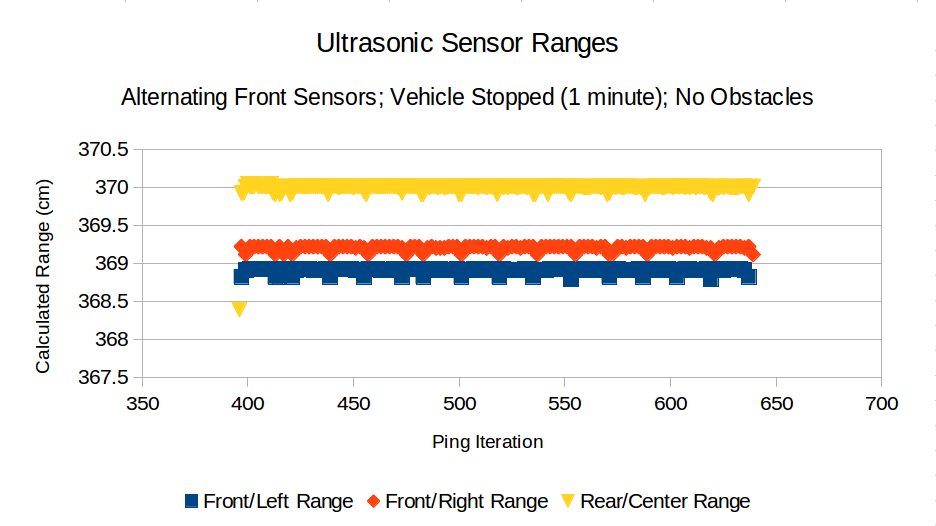

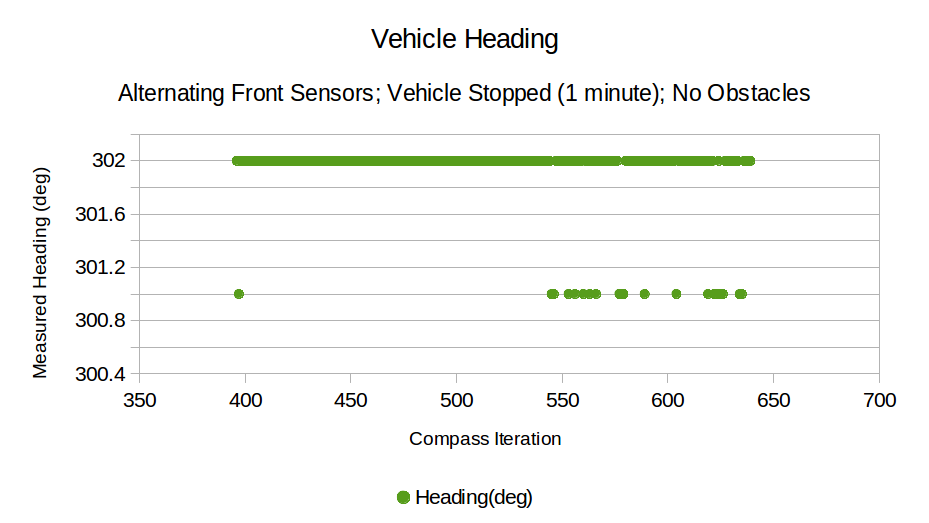

Phase 1: Brake 'Bot was placed in an open area and collected data from both front-facing sensors and the rear-facing sensor. No driving took place and no obstacles were present.

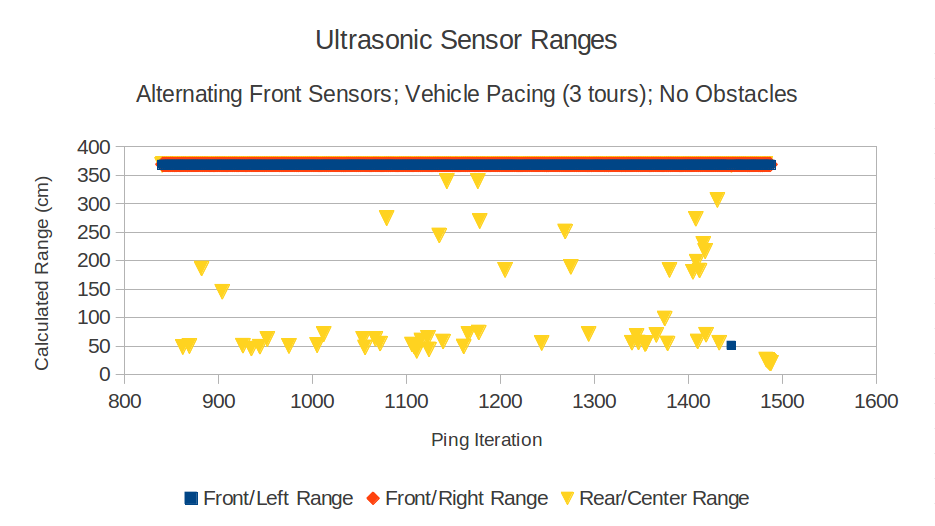

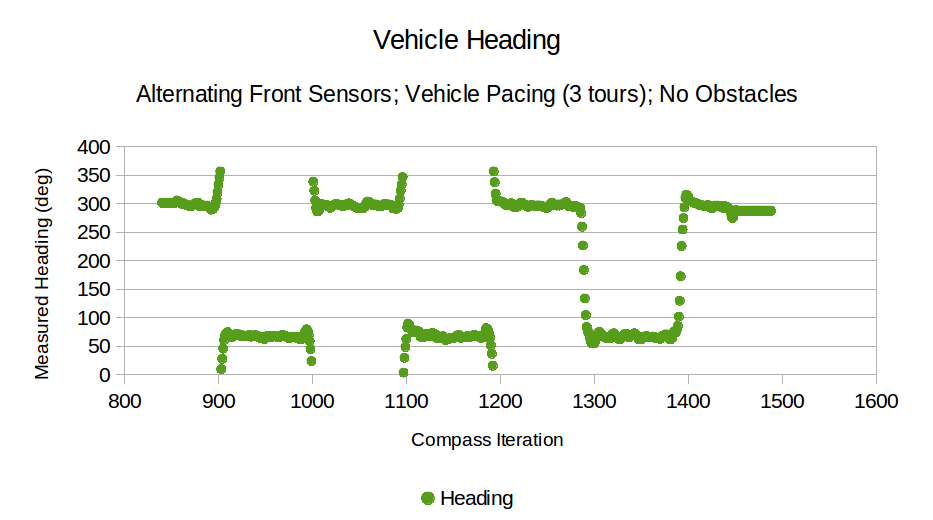

Phase 2: Brake 'Bot was manually driven for three pacing tours. Each tour was driven in a fairly straight path from one end of the test area to the other. (After reviewing the video, I realized that my "fairly straight" driving could use some work!)

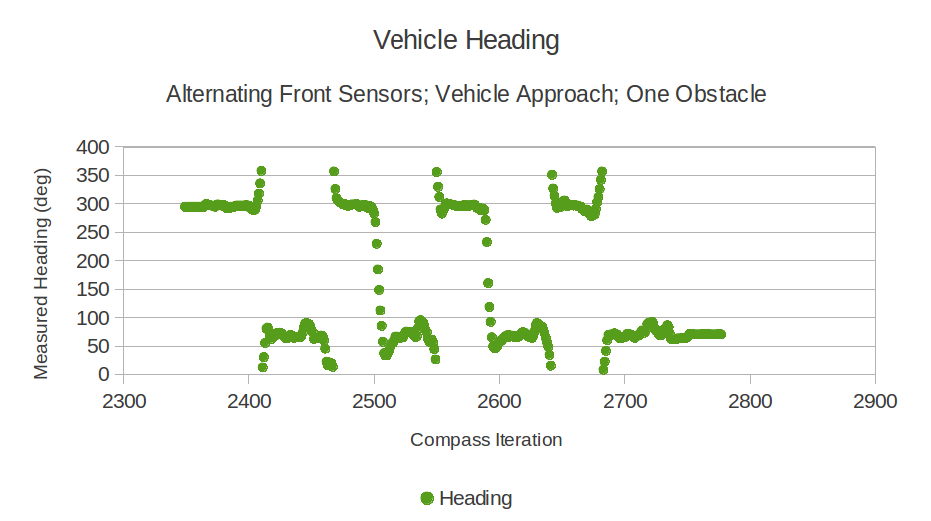

Phase 3: Brake 'Bot was manually driven for four approaches to a target reflector. After each approach, I slowed Brake 'Bot down to execute a controlled turn in front of the target. Then, I drove back to the start. Instead of using a whiteboard as the target reflector, I used the toolbox, since it was windy when I performed the experiment.

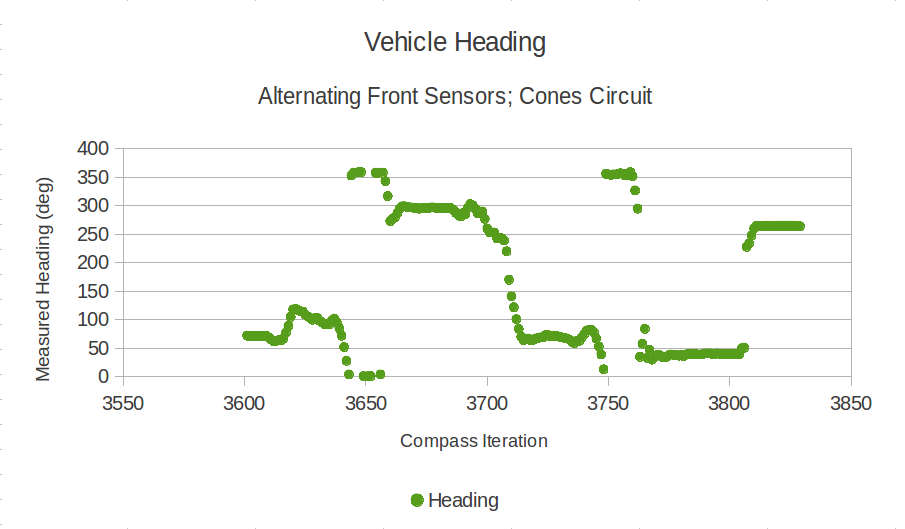

Phase 4: This run was not as formal as the others. I marked off a course with four cones and drove Brake 'Bot around them to see how they would be perceived.

Phase 5: As a follow-up to Phase 4, I drove Brake 'Bot in a Figure-8 pattern around the cones and the toolbox to collect more data when making close approaches to various obstacles.

Phase 6: This phase was an undirected free-run. I drove Brake 'Bot wherever I felt like driving it at the time.

Observations

Phase 1 isn't very exciting since Brake 'Bot is just sitting there. I included this video and data for completeness. It looks nice in HD, though. Feel free to watch if you want. Or just skip below to Phase 2.

Here's where things got very interesting. The video data helped a great deal because now I could see a visual representation and a sensory representation of Brake 'Bot's obstacle approaches. Here are a few observations from each run:

Run 1 - The left sensor didn't detect the toolbox until Brake 'Bot was reversing. The right sensor didn't detect the toolbox at all.

Run 2 - The left and right sensors detected the toolbox during the left-hand turn in front of it, *but not prior to stopping*!

Run 3 - As before, the left and right sensors detected the toolbox during the left turn in front of it, *but not prior to stopping*!

Run 4 - When making a head-on approach, the front sensors detected the toolbox just fine.

This was an unplanned run, but the results are important. Trouble detecting the cones may have to do with the fact that they are round, so reflections are unlikely to return to an ultrasonic sensor.

This was also an unplanned run. It picks up from the end of the previous cones circuit.

This final run was also unplanned.

Future Work

The "moral of the story" for this experiment is that the ultrasonic sensors need a head-on view of the target obstacle in order to be most effective at detecting it. If the obstacle is round (like, say, a barrel or a cone), then you may not detect it at all. While I reflect more on these ideas, I think I'll start working to automate Brake 'Bot's driving. It's about time I started pulling the human out of the loop.
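One way to picture why a head-on view matters is a single-ray, mirror-like reflection model. The sketch below is an idealized assumption of mine, not something measured in this experiment: it treats the ping as one ray hitting a flat surface, and computes how far the reflected ray passes from the sensor as the surface is tilted away from head-on. Real sensors emit a cone of sound and real surfaces scatter some of it, so treat the numbers as qualitative. The function name and parameters are hypothetical.

```python
import math


def echo_miss_distance(range_m, tilt_deg):
    """Closest distance (m) between the reflected ray and the sensor.

    Idealized model: the sensor sits at the origin and fires one ray along +x.
    The ray hits a flat, mirror-like surface at (range_m, 0) whose normal is
    tilted tilt_deg away from pointing straight back at the sensor.
    """
    theta = math.radians(tilt_deg)
    d = (1.0, 0.0)                              # incoming ray direction
    n = (-math.cos(theta), math.sin(theta))     # unit surface normal
    dot = d[0] * n[0] + d[1] * n[1]
    # Mirror reflection: r = d - 2 (d . n) n
    r = (d[0] - 2.0 * dot * n[0], d[1] - 2.0 * dot * n[1])
    hit = (range_m, 0.0)
    # Distance travelled along the reflected ray to its closest point to the
    # origin, clamped so the point is never behind the reflection point.
    t = max(0.0, -(hit[0] * r[0] + hit[1] * r[1]))
    closest = (hit[0] + t * r[0], hit[1] + t * r[1])
    return math.hypot(closest[0], closest[1])


if __name__ == "__main__":
    for tilt in (0, 5, 15, 30, 60):
        print(tilt, round(echo_miss_distance(2.0, tilt), 3))
```

In this model a head-on surface (0 degrees of tilt) sends the ray straight back to the sensor, while even a modest tilt makes the ray miss by a distance that grows with range. On a round obstacle only a small patch of the surface faces the sensor directly, which fits with the cones and angled approaches being hard to detect.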

Raw Data

If you'd like to play around with the data, help yourself to the file below, which contains the raw data from all of my runs. The data I used for this writeup are the same as indicated in the YouTube video titles: BPAV-2, 4, 8, 10, 12, and 14. All other data files came from setting up Brake 'Bot or from random false starts, and can be safely ignored.

| raw-sensor-data.zip | |
| --- | --- |
| File Size: | 40 kb |
| File Type: | zip |

Last Update: 24 January 2014