Sonar Experiment No. 2.5

This experiment is a follow-on to Sonar Experiment No. 2. I made a few changes to the electronics (see Upgrade Complete) and performed the same runs as before. I also collected data on a grassy surface to see how the ultrasonic sensor data would differ from the data collected on gravel.

Materials

Here's what I used to conduct this experiment:

[ ] Brake 'Bot - equipped with two front-facing ultrasonic sensors and one rear-facing ultrasonic sensor

[ ] small whiteboard (24" x 18") - used as my target reflector

[ ] empty parking lot - no cars, no trees, no lamp posts; just flat, open space

[ ] Arduino sketch to log ultrasonic and compass measurements to an SD card (pushPingCompassLogger.ino)


Download: pushPingCompassLogger.ino (ino file, 9 kB)

Procedure

This experiment proceeded much like its precursor. There were three phases:

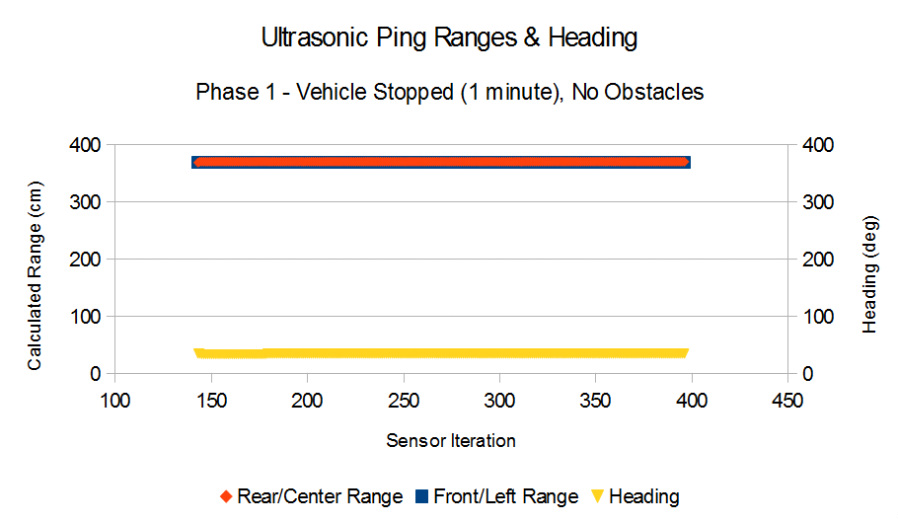

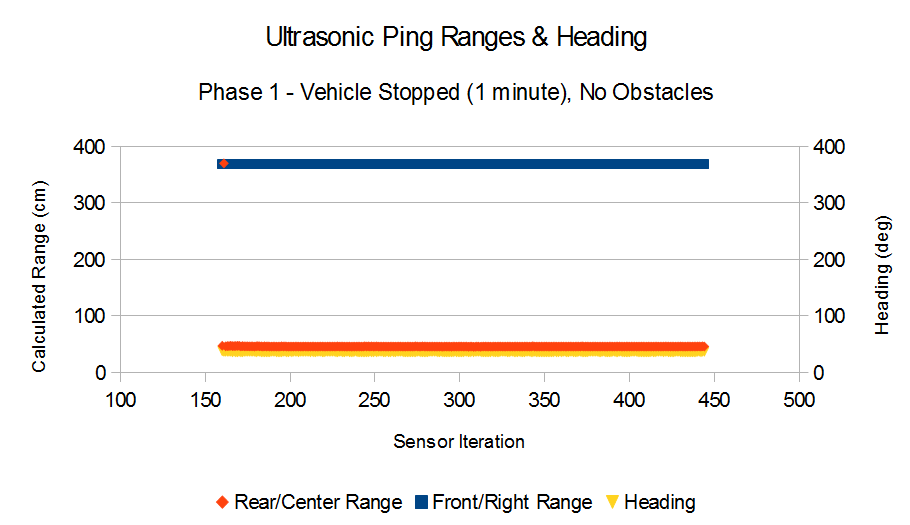

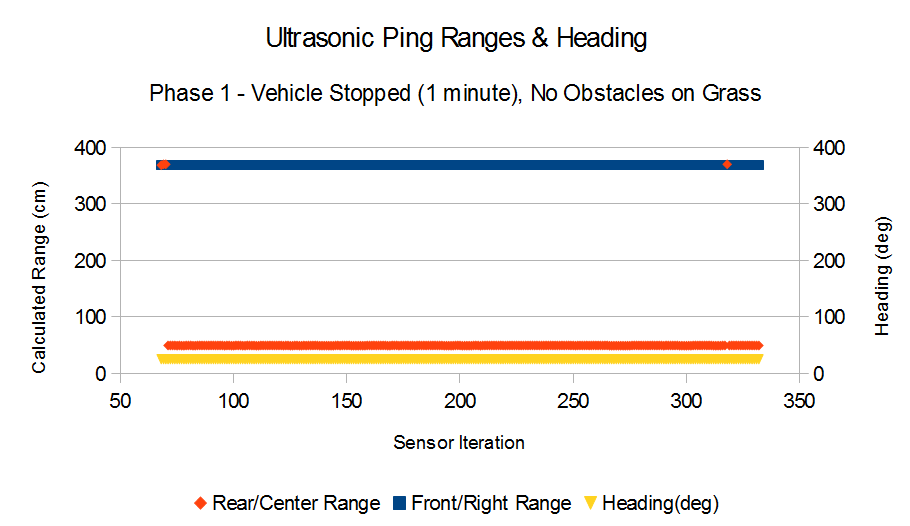

Phase 1: Brake 'Bot was placed in an open area and collected data from one front-facing sensor and the rear-facing sensor. No driving took place and no obstacles were present.

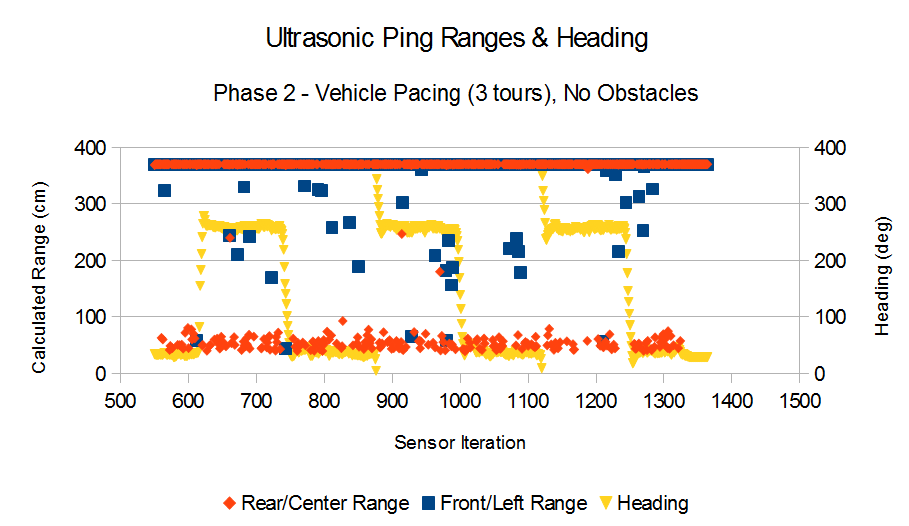

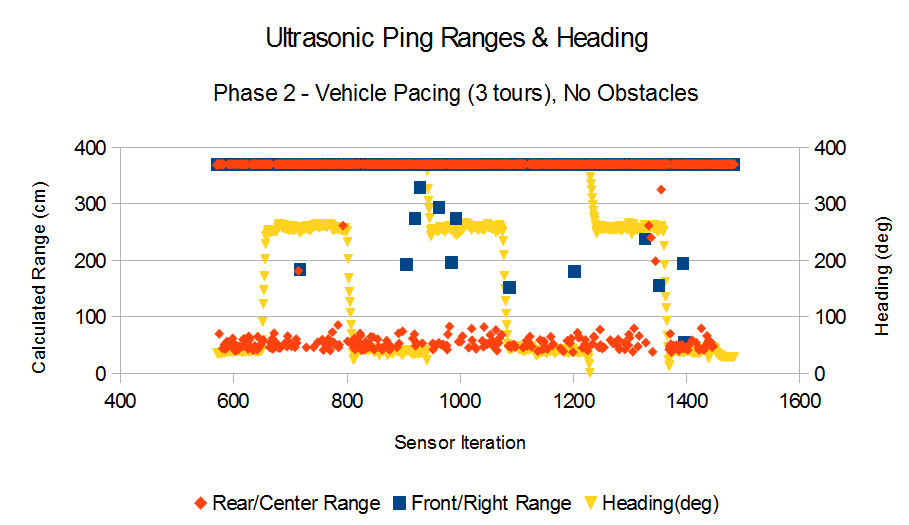

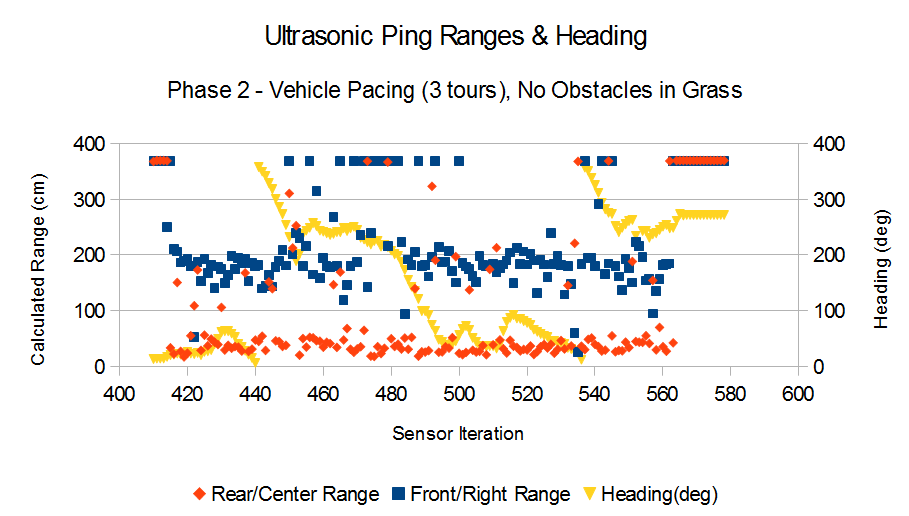

Phase 2: Brake 'Bot was manually driven for three pacing tours. Each tour was driven in a fairly straight path from one end of the test area to the other. I did my best to maintain the vehicle speed around 15 mph (about half its maximum speed). I slowed Brake 'Bot down to execute a controlled turn at the end of each tour.

Phase 3: Brake 'Bot was manually driven for three approaches to a target reflector (a whiteboard propped up by my toolbox). After each approach, I slowed Brake 'Bot down to execute a controlled turn about 30 cm in front of the target. Then, I drove back to the start.

All driving was performed using the stock transmitter and receiver. For each phase, I performed a run with the Front/Left sensor and then another run with the Front/Right sensor. As before, I used a spreadsheet program to plot the data.

After collecting data on the gravel, I performed Phases 1 and 2 on a grassy field just to get an idea of how the sensors would behave. I'll have to perform Phase 3 on the grassy field another day when the wind isn't as gusty.


Observations

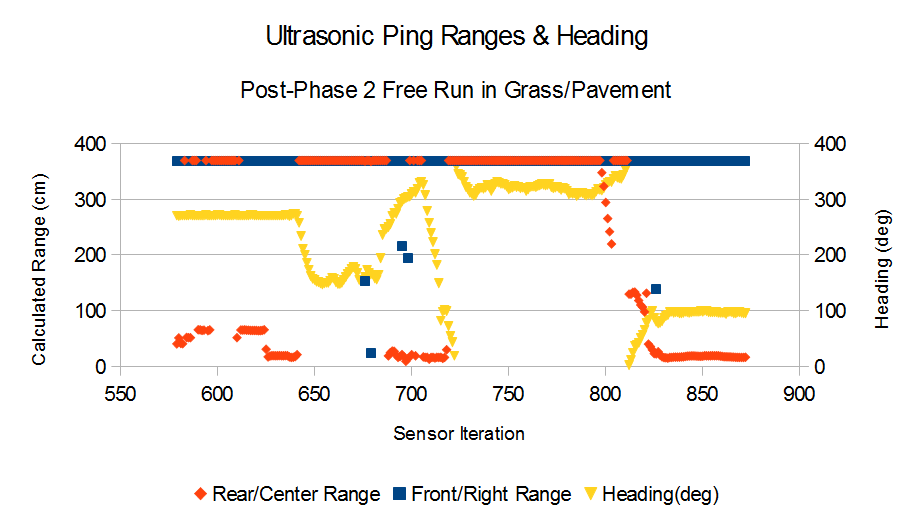

The plots are slightly different from the previous experiment's plots. I swapped the data point symbols for the front and rear sensor values. I also added heading information from the onboard compass.

The fact that the rear sensor detected the ground during one run but not the other is rather strange. Brake 'Bot's chassis didn't appear to sag during either run. Nonetheless, I want to identify exactly why this happened. I may also have to adjust the suspension again, angle the rear sensor upward, or try something else altogether. My 'Future Work' list continues to grow.

The data below were collected while I paced Brake 'Bot back and forth within the test area. As before, there were no obstacles (except a few passing leaves on the ground). Notice the changes in heading as I (1) complete a tour, (2) turn Brake 'Bot around, and (3) start a new tour in the opposite direction. Increasing heading values indicate right-hand turns, and decreasing heading values indicate left-hand turns.
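Because compass headings wrap from 359° back to 0°, just checking whether the logged heading goes up or down can mislabel a turn that crosses north. A minimal Python sketch of wrap-aware turn labeling follows. It assumes headings are logged in degrees with 0° = north and values increasing clockwise; the function names and the 20° deadband are illustrative choices, not part of pushPingCompassLogger.ino.

```python
def heading_delta(prev_deg: float, curr_deg: float) -> float:
    """Signed heading change in degrees, wrapped into (-180, 180].

    Positive values are clockwise (right-hand) changes and negative values are
    counterclockwise (left-hand) changes, assuming a clockwise compass.
    """
    delta = (curr_deg - prev_deg) % 360.0  # now in [0, 360)
    if delta > 180.0:
        delta -= 360.0
    return delta


def net_turn(headings: list[float]) -> float:
    """Total signed heading change across a sequence of compass samples."""
    return sum(heading_delta(a, b) for a, b in zip(headings, headings[1:]))


def classify_turn(headings: list[float], deadband_deg: float = 20.0) -> str:
    """Label a segment as a right turn, a left turn, or a (roughly) straight run."""
    total = net_turn(headings)
    if total > deadband_deg:
        return "right"
    if total < -deadband_deg:
        return "left"
    return "straight"


# A right-hand U-turn that crosses north (350 -> 10), then a left-hand U-turn.
print(classify_turn([350, 10, 60, 120, 170]))  # right
print(classify_turn([90, 45, 0, 315, 270]))    # left
```

Summing the wrapped deltas, rather than subtracting the first heading from the last, keeps a full 180° turnaround at the end of a tour from being confused with a small wobble.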

I'm not exactly sure what the front sensors were detecting. I doubt that all of the readings correspond to leaves, since the leaves weren't large and were sparsely distributed on the gravel. All of the range values reported below 150 cm for the front sensor appear tame, though: below that range, there is no clustering. Will I need to make Brake 'Bot "short-sighted" in order to avoid the sporadic readings whenever driving on gravel? Or perhaps I could filter the range data so that only legitimate "impending collision" data profiles would dictate the need to maneuver (either brake or swerve). I'll have to think more about this. 'Future Work'++.
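One way to combine both ideas is to gate and smooth the range stream before any collision logic sees it. The Python sketch below treats readings at or below 0 cm (assumed here to mean "no echo") or beyond a 150 cm horizon as invalid, then reports the median of the valid readings in a short sliding window, so an isolated spike never reaches the output. The 150 cm cutoff comes from the gravel observations; the window size, the two-valid-readings rule, and the function name are assumptions to tune against the logged data.

```python
from statistics import median


def filter_ranges(raw_cm, max_cm=150.0, window=3, min_valid=2):
    """Gate and median-smooth a sequence of ultrasonic range readings.

    raw_cm    : readings in cm; values <= 0 are assumed to mean "no echo"
    max_cm    : readings beyond this horizon are ignored ("short-sighted" mode)
    window    : number of most recent readings considered at each step
    min_valid : valid readings required in the window before reporting a range
    Returns one value per input sample: the median of the valid readings in
    the window, or None when too few valid readings are available.
    """
    filtered = []
    for i in range(len(raw_cm)):
        recent = raw_cm[max(0, i - window + 1): i + 1]
        valid = [r for r in recent if 0 < r <= max_cm]
        filtered.append(median(valid) if len(valid) >= min_valid else None)
    return filtered


# Long-range spikes (400, 300 cm) and dropouts (0) are suppressed.
print(filter_ranges([0, 400, 80, 75, 300, 70, 0, 0]))
# [None, None, None, 77.5, 77.5, 72.5, None, None]
```

The price of requiring two valid readings is roughly one sample of extra latency before a genuine obstacle is reported, which matters at driving speed.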


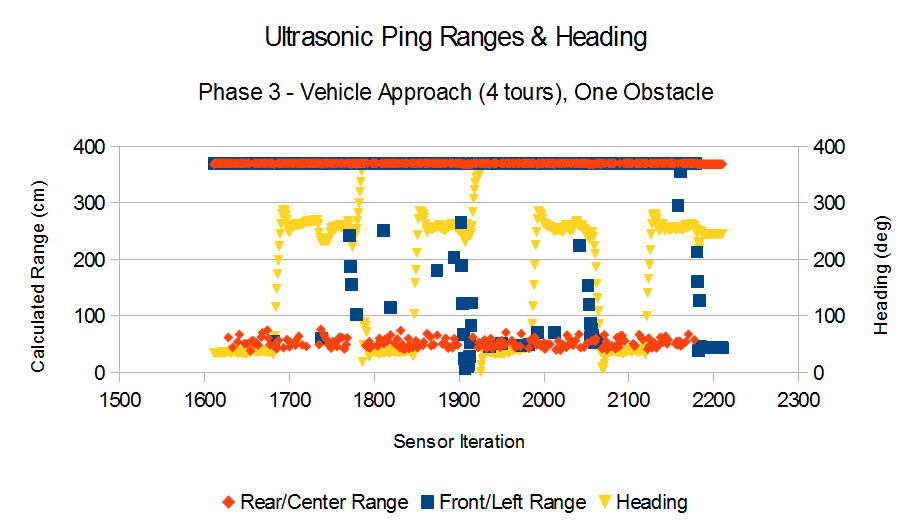

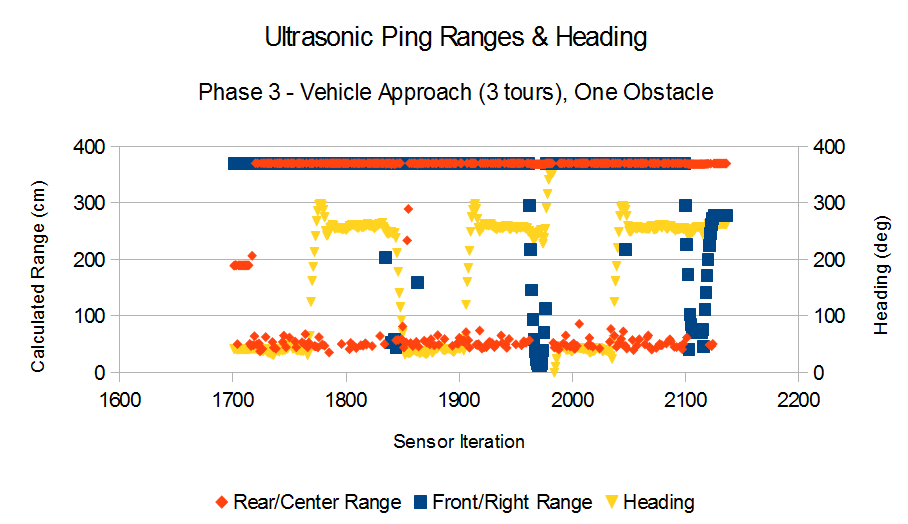

The data below were collected while Brake 'Bot made approaches to the target: a whiteboard propped up by a toolbox.

The key observations for this phase are the pronounced "impending collision" data profiles. The front sensors seem to track the range to the target quite reliably. As I mentioned earlier, I may have to write code to look for these trends so that Brake 'Bot can reliably detect a potential collision. How Brake 'Bot will respond to potential collisions is a whole other area I'll have to investigate.
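A minimal sketch of such a trend detector, written in Python so it can be tested offline against the logs: it flags an impending collision when the last few range readings shrink steadily and the estimated time to collision (current range divided by closing speed) drops below a threshold. The sample period `dt_s`, the four-sample requirement, and the 1 s threshold are hypothetical values; the real sample period would have to come from the timing in pushPingCompassLogger.ino.

```python
def impending_collision(ranges_cm, dt_s=0.1, min_samples=4, ttc_limit_s=1.0):
    """Check the most recent range readings for an "impending collision" profile.

    ranges_cm : range readings in cm, oldest first (None = no valid reading)
    dt_s      : assumed time between readings, in seconds
    Returns (collision_flag, time_to_collision_s); the time is None when no
    steady approach is detected.
    """
    recent = ranges_cm[-min_samples:]
    if len(recent) < min_samples or any(r is None for r in recent):
        return False, None
    # Require every reading to be closer than the one before it.
    if any(later >= earlier for earlier, later in zip(recent, recent[1:])):
        return False, None
    # Average closing speed over the window, in cm/s.
    closing_cm_s = (recent[0] - recent[-1]) / (dt_s * (min_samples - 1))
    ttc_s = recent[-1] / closing_cm_s
    return ttc_s <= ttc_limit_s, ttc_s


print(impending_collision([150, 120, 90, 60]))    # flag True, ttc about 0.2 s
print(impending_collision([150, 149, 151, 148]))  # (False, None): no steady approach
```

For scale, 15 mph is about 6.7 m/s (670 cm/s), so a 150 cm sensing horizon leaves only about 0.22 s of warning at pacing speed. That arithmetic is worth keeping in mind when deciding how short-sighted Brake 'Bot can afford to be.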

Just for kicks, I also collected data on a grassy field. The grass wasn't thick; I could maneuver Brake 'Bot without stressing the drive motor.

Only the Front/Right and Rear/Center ultrasonic sensors were used to collect range data. Here is how they turned out.

One thing I noticed while driving Brake 'Bot on the grass was that there were a lot of bumps. The suspension was very active during the run. However, since I added spacing clips to compress the suspension springs, Brake 'Bot likely had a stiffer ride than usual.

For my final run, I drove Brake 'Bot around on the grass and then transitioned to a paved parking lot surface. The sensor readings became much more stable once it reached the pavement.


Future Work

I certainly have my work cut out for me. But I'm confident that ultrasonic sensors will be useful as I continue to develop the collision avoidance capability. To get there, I'll likely have to accomplish the following:

Decide what to do about the rear-facing sensor.

Automate Brake 'Bot's driving. It should be able to drive in a particular direction for a preprogrammed length of time. I'll probably need to couple Brake 'Bot's steering with the compass sensor.

Develop an automated braking capability. This feature might be a simple brake maneuver at first (obstacle detected > execute brake maneuver). Then it might progress into something more active (obstacle detected > sense object while braking / adjust maneuver as required).

Update the PingRange sketch to alternate between the two front-facing sensors and conduct an experiment to see how this arrangement performs. Is it practical?
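Coupling steering to the compass could start as a simple proportional heading hold. The Python sketch below computes the wrapped heading error, scales it by a gain, and maps the result onto an RC-style steering pulse. The gain, the convention that a positive command steers right, and the 1000 to 2000 µs pulse range centered on 1500 µs (a common hobby-servo convention, not a measured property of Brake 'Bot's receiver or steering servo) are all placeholders; a port to the Arduino sketch would need its own values.

```python
def heading_error(target_deg: float, current_deg: float) -> float:
    """Shortest signed angle from current to target, in (-180, 180] degrees.

    Positive means the target lies clockwise (to the right) of the current heading.
    """
    err = (target_deg - current_deg) % 360.0
    if err > 180.0:
        err -= 360.0
    return err


def steering_command(target_deg: float, current_deg: float,
                     kp: float = 0.02, limit: float = 1.0) -> float:
    """Proportional steering command clamped to [-limit, limit]; positive = right."""
    cmd = kp * heading_error(target_deg, current_deg)
    return max(-limit, min(limit, cmd))


def to_servo_pulse_us(cmd: float, center_us: int = 1500, span_us: int = 500) -> int:
    """Map a [-1, 1] command onto a hypothetical 1000-2000 us steering pulse."""
    return round(center_us + span_us * cmd)


# Holding a heading of 10 deg while the vehicle points at 350 deg: steer right.
cmd = steering_command(10, 350)
print(cmd, to_servo_pulse_us(cmd))  # 0.4 1700
```

Driving "for a preprogrammed length of time" would then amount to a loop that calls `steering_command` on each compass update until a timer expires.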

--

My carry-over tasks that I have yet to complete are: (1) use a Python script to automate data plotting (from Sonar Experiment No. 2) and (2) characterize a more expensive sonar sensor (from Sonar Experiment No. 1).
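For the plotting carry-over, a script along these lines could replace the manual spreadsheet step. It is a sketch under a loud assumption: the column order (`time_ms`, `front_cm`, `rear_cm`, `heading_deg`) is hypothetical and must be matched to whatever pushPingCompassLogger.ino actually writes to the SD card. Parsing uses only the standard `csv` module; plotting needs matplotlib, which is imported only when a plot is made.

```python
import csv
from pathlib import Path

# Hypothetical column layout: check it against pushPingCompassLogger.ino.
COLUMNS = ("time_ms", "front_cm", "rear_cm", "heading_deg")


def load_log(lines):
    """Parse comma-separated log lines into a dict of float columns.

    Rows with the wrong number of fields or non-numeric values (a header line
    or a corrupted SD-card write, for example) are skipped.
    """
    data = {name: [] for name in COLUMNS}
    for row in csv.reader(lines):
        if len(row) != len(COLUMNS):
            continue
        try:
            values = [float(v) for v in row]
        except ValueError:
            continue
        for name, value in zip(COLUMNS, values):
            data[name].append(value)
    return data


def plot_log(data, title, out_path):
    """Plot range (top) and compass heading (bottom) against time in seconds."""
    import matplotlib
    matplotlib.use("Agg")  # render straight to a file, no display needed
    import matplotlib.pyplot as plt

    t = [ms / 1000.0 for ms in data["time_ms"]]
    fig, (ax_range, ax_heading) = plt.subplots(2, 1, sharex=True, figsize=(10, 6))
    ax_range.plot(t, data["front_cm"], "o", markersize=3, label="front")
    ax_range.plot(t, data["rear_cm"], "x", markersize=3, label="rear")
    ax_range.set_ylabel("range (cm)")
    ax_range.legend()
    ax_heading.plot(t, data["heading_deg"], "-")
    ax_heading.set_ylabel("heading (deg)")
    ax_heading.set_xlabel("time (s)")
    fig.suptitle(title)
    fig.savefig(out_path, dpi=150)
    plt.close(fig)


if __name__ == "__main__":
    import sys

    # Usage: python plot_logs.py LGVL02.TXT RGRS04.TXT ...
    for name in sys.argv[1:]:
        path = Path(name)
        with path.open(newline="") as f:
            log = load_log(f)
        plot_log(log, path.stem, path.with_suffix(".png"))
```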


Raw Data

If you'd like to take a look at or play around with the data, help yourself to the raw data I collected. I used the same file naming scheme as in Sonar Experiment No. 2, which goes something like this: LGVL stands for Left sensor on a Gravel surface; RGRS stands for Right sensor on a Grass surface.

The odd-numbered data files were collected while I was repositioning the vehicle to set up for the next experiment phase. The values in those files can be ignored. Be sure to look at the Arduino sketch to see exactly what I did. If you have any questions or suggestions for improvement, give me a shout!
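The naming scheme can also be decoded in code, which makes it easy to skip the odd-numbered repositioning files automatically. This Python sketch assumes a name made of the sensor letter, the surface code, and a run number, optionally followed by an extension; names such as `LGVL02.TXT` below are hypothetical, and the exact pattern, extension, and run numbering are guesses to check against the files in the archive.

```python
import re

SENSORS = {"L": "Front/Left", "R": "Front/Right"}
SURFACES = {"GVL": "gravel", "GRS": "grass"}

# Hypothetical layout: sensor letter + surface code + run number + optional extension.
NAME_RE = re.compile(r"^([LR])(GVL|GRS)(\d+)(?:\.\w+)?$", re.IGNORECASE)


def decode_log_name(filename: str):
    """Return a dict describing a log file, or None if the name does not match."""
    m = NAME_RE.match(filename)
    if m is None:
        return None
    run = int(m.group(3))
    return {
        "sensor": SENSORS[m.group(1).upper()],
        "surface": SURFACES[m.group(2).upper()],
        "run": run,
        # Odd-numbered files were logged while repositioning and can be ignored.
        "repositioning": run % 2 == 1,
    }


def usable_logs(filenames):
    """Keep only recognized, even-numbered (experiment) files."""
    return [name for name in filenames
            if (info := decode_log_name(name)) and not info["repositioning"]]


print(usable_logs(["LGVL01.TXT", "LGVL02.TXT", "RGRS04.TXT", "README.TXT"]))
# ['LGVL02.TXT', 'RGRS04.TXT']
```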


Download: raw-sensor-data.zip (zip file, 35 kB)

Last Update: 29 December 2013